
Algorithmic trading has a funny way of sounding futuristic and boring at the same time. It is all code, speed, logic, and apparently endless acronyms. But when the Financial Conduct Authority publishes findings on algorithmic trading controls, the story is not really about robots in suits placing trades at warp speed. It is about whether firms can keep their systems, people, and governance from turning a clever trading strategy into a very expensive public lesson.

The FCA’s latest findings make one message crystal clear: strong algorithms are not enough. A firm also needs strong documentation, strong oversight, strong testing, and strong market abuse surveillance. In other words, the machine may be fast, but compliance still has to know where the brakes are.

This matters because algorithmic trading now sits at the center of modern market structure. It supports liquidity, narrows spreads, and helps connect fragmented venues. At its best, it makes markets more efficient. At its worst, it can magnify errors, accelerate bad behavior, and create the kind of disorder that leaves everyone asking the same question: who approved this, and why was nobody watching?

What the FCA actually found

The FCA’s review was aimed at principal trading firms and focused on compliance with the UK version of MiFID RTS 6, the rule set that governs organizational requirements, testing, deployment, monitoring, and risk controls for algorithmic trading. The regulator did not create new rules. That is an important point. This was not a surprise regulation parachuting in from the sky. It was a reminder that the rules already exist, and that some firms still need to tighten the bolts.

The findings were split into four big areas: governance, development and testing, risk controls, and market abuse surveillance. Across those categories, the FCA saw examples of good practice, but it also found a lot of variation in quality. Some firms looked organized, technically capable, and properly supervised. Others looked more like they had built a race car and forgotten to install a dashboard.

Governance: the first control is not code, it is accountability

One of the most important takeaways from the review is that governance still sits at the heart of algorithmic trading compliance. The FCA found that some firms had out-of-date policies, unclear processes, incomplete self-assessments, and poor documentation. That may sound like paperwork trouble, but in markets, paperwork trouble often becomes conduct trouble.

The regulator also highlighted a recurring weakness in the role of compliance. In the stronger firms, compliance staff had enough technical understanding to challenge trading behavior and engage meaningfully with algorithmic processes. In weaker firms, compliance teams lacked the technical depth to ask the right questions. That gap matters. When compliance cannot challenge the business, governance becomes theater.

The FCA also emphasized algorithm inventories. A strong inventory does more than list names of models. It explains what each algorithm is supposed to do, who owns it, who is approved to operate it, which markets it touches, and what risk parameters apply. Without that, a firm may have plenty of technology but very little control.
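To make the inventory idea concrete, here is a minimal sketch of what one record might look like. The class, the field names, and the sample values are all hypothetical and chosen for illustration; they are not taken from the FCA review or from RTS 6. The point is only that a gap, such as a missing owner, should be easy to spot.

```python
from __future__ import annotations

from dataclasses import dataclass, field, fields


@dataclass
class AlgoInventoryEntry:
    """One inventory record. Every field name here is illustrative."""
    algo_id: str
    purpose: str                      # what the algorithm is supposed to do
    owner: str                        # accountable individual or desk
    approved_operators: list[str] = field(default_factory=list)
    markets: list[str] = field(default_factory=list)
    risk_parameters: dict[str, float] = field(default_factory=dict)


def missing_fields(entry: AlgoInventoryEntry) -> list[str]:
    """Return the names of fields left empty, so gaps are visible."""
    return [f.name for f in fields(entry) if not getattr(entry, f.name)]


entry = AlgoInventoryEntry(
    algo_id="mm-eq-01",
    purpose="Two-sided quoting in liquid equities",
    owner="",  # deliberately left blank to show a gap
    markets=["XLON"],
    risk_parameters={"max_order_notional": 250_000.0},
)
print(missing_fields(entry))
```

Run against the sample record, the check flags the blank owner and the empty list of approved operators. A real inventory would carry far more detail, but the same completeness test applies.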

Testing: if you only test for performance, you are missing the point

Testing was another major theme. The FCA found that many firms carried out required conformance testing, and some went beyond the basics. That is the good news. The less cheerful news is that some firms still had poorly defined procedures, weak records, and limited evidence that testing was truly robust.

The regulator’s message here is bigger than “run more tests.” It is that firms need testing frameworks that reflect real market conditions, including stress. Simulation testing should not be treated like a ceremonial box-tick before launch. It should be a real challenge to the algorithm’s behavior under pressure, volatility, unusual liquidity, and unexpected interactions.

The firms that impressed the regulator tended to run broad scenario testing, refresh stress cases using more recent market events, and treat testing as an ongoing discipline rather than a one-time pre-launch ritual. They also thought about conduct risk during development, not just after deployment. That distinction is crucial. A strategy can be technically functional and still create market integrity problems.
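A toy version of scenario testing might look like the sketch below. The trading rule, the price paths, and the position cap are invented for illustration and are far simpler than anything a real firm would run. What the sketch shows is the habit that matters: replay several market conditions, including ugly ones, and assert that a control holds in every one of them.

```python
def run_scenario(prices, max_position, order_size=100):
    """Replay a price path through a toy dip-buying rule.

    Returns the peak absolute position reached and the number of orders
    suppressed by the position cap. The trading rule is a placeholder.
    """
    position = 0
    peak = 0
    blocked = 0
    for prev, curr in zip(prices, prices[1:]):
        if curr < prev:                      # toy rule: buy every downtick
            if position + order_size > max_position:
                blocked += 1                 # cap holds, order is never sent
                continue
            position += order_size
        elif curr > prev and position > 0:   # sell on upticks while long
            position -= order_size
        peak = max(peak, abs(position))
    return peak, blocked


# Hypothetical price paths standing in for calm and stressed markets.
scenarios = {
    "calm": [100, 101, 100, 101, 100, 101],
    "steady_selloff": [100, 99, 98, 97, 96, 95, 94],
    "flash_crash": [100, 90, 80, 70, 95, 99],
}

for name, path in scenarios.items():
    peak, blocked = run_scenario(path, max_position=300)
    assert peak <= 300, f"position cap breached in {name}"
    print(name, peak, blocked)
```

Refreshing the stress cases then amounts to adding new paths to `scenarios` as recent market events supply them, rather than rewriting the test.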

The FCA also raised concerns about firms using third-party algorithms without fully understanding how those models were built or how they behaved. Buying a vendor solution does not buy immunity. Outsourced technology can still create in-house liability.

Risk controls: the market likes speed, but regulators like kill switches

RTS 6 is built around the idea that automated trading requires automated restraint. That means pre-trade controls, post-trade controls, real-time monitoring, capital and credit thresholds, and the ability to switch algorithms off when necessary.

The FCA found that many firms had clearly defined suites of pre-trade controls and, in stronger cases, implemented them at the internal server level so problematic orders never left the firm’s gateway. That is exactly the kind of architecture regulators want to see. Bad orders are much less dramatic when they die quietly inside your own walls.
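As a rough sketch of what gateway-level pre-trade controls can look like, the example below applies a kill switch, a quantity limit, a notional limit, and a price collar before an order is allowed out. The class names, the limits, and the order of the checks are assumptions made for illustration; RTS 6 does not prescribe this design, and real gateways are considerably more involved.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Order:
    symbol: str
    side: str          # "buy" or "sell"
    quantity: int
    price: float


@dataclass
class PreTradeGate:
    """Hypothetical gateway-level checks. Limits are illustrative values."""
    max_quantity: int
    max_notional: float
    price_collar_pct: float        # allowed deviation from the reference price
    kill_switch_on: bool = False

    def check(self, order: Order, reference_price: float) -> tuple[bool, str]:
        """Return (accepted, reason). Rejections are hard blocks, not warnings."""
        if self.kill_switch_on:
            return False, "kill switch engaged"
        if order.quantity <= 0 or order.price <= 0 or reference_price <= 0:
            return False, "invalid quantity or price"
        if order.quantity > self.max_quantity:
            return False, "quantity limit breached"
        if order.quantity * order.price > self.max_notional:
            return False, "notional limit breached"
        deviation = abs(order.price - reference_price) / reference_price
        if deviation > self.price_collar_pct:
            return False, "price outside collar"
        return True, "accepted"


gate = PreTradeGate(max_quantity=10_000, max_notional=1_000_000.0,
                    price_collar_pct=0.05)
print(gate.check(Order("ABC", "buy", 500, 100.0), reference_price=100.0))
print(gate.check(Order("ABC", "sell", 50_000, 100.0), reference_price=100.0))
gate.kill_switch_on = True
print(gate.check(Order("ABC", "buy", 500, 100.0), reference_price=100.0))
```

The design choice worth noticing is that every failure returns a rejection rather than a prompt. Once the kill switch is on, nothing passes, no matter how reasonable the order looks.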

Still, the review found weak ownership and patchy compliance oversight in some firms. In plain English, people were not always sure who owned particular controls or how those controls were governed. That is dangerous, especially in fast markets. A control no one owns is usually a control no one fixes.

This is where the FCA’s findings line up with broader regulatory thinking in both the UK and the United States. U.S. securities regulators have long warned that small failures in automated systems can spread rapidly across interconnected markets. The logic is simple: if trading happens at machine speed, weak controls fail at machine speed too.

Market abuse surveillance: alerts are useful only when humans can keep up

Perhaps the most quietly powerful section of the FCA review was the discussion of market abuse surveillance. Many firms used in-house systems tailored to their trading style, and some had clear escalation pathways, well-calibrated alert logic, and strong review processes. Those firms generally looked more mature.

But others had not invested enough in surveillance technology or governance. Some lacked formal procedures for investigating alerts. Some faced significant resource pressure, with too few staff handling too many cases. That creates two problems at once: a conduct risk problem and a management problem. An alert backlog is not just an operational inconvenience. It is a warning that surveillance may not be keeping pace with trading activity.
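One way to turn "too few staff handling too many cases" into something a manager can track is a simple backlog summary. The sketch below is hypothetical throughout: the five-day review window, the function name, and the sample alert ages are assumptions for illustration, not figures from the FCA.

```python
from datetime import datetime, timedelta


def backlog_summary(open_alert_times, analysts, now, sla=timedelta(days=5)):
    """Summarise open surveillance alerts against a hypothetical review SLA."""
    overdue = [t for t in open_alert_times if now - t > sla]
    per_analyst = len(open_alert_times) / analysts if analysts else float("inf")
    return {
        "open": len(open_alert_times),
        "overdue": len(overdue),
        "open_per_analyst": per_analyst,
        "oldest_age_days": max(((now - t).days for t in open_alert_times),
                               default=0),
    }


now = datetime(2025, 1, 31)
# Sample alerts opened 1, 2, 6, 12, and 30 days ago.
opened = [now - timedelta(days=d) for d in (1, 2, 6, 12, 30)]
print(backlog_summary(opened, analysts=2, now=now))
```

Numbers like these do not investigate anything by themselves, but a rising overdue count or a growing load per analyst is the kind of management information that shows whether surveillance is keeping up.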

This point connects directly with earlier FCA work. In Market Watch 79, the regulator described real surveillance failures caused by bad data, poor implementation, and broken alert logic. In some cases, firms were unaware of defects for months, and in extreme situations, for years. That is not just a software issue. It is a governance failure wearing a technical costume.

Why these findings matter beyond the UK

The FCA’s publication is a UK review, but the underlying message is global. Algorithmic trading is not confined to one country, one asset class, or one regulatory style. The same tensions show up everywhere: firms want speed, scale, and automation, while regulators want resilience, accountability, and market integrity. Good firms need both.

That is why the FCA’s findings resonate so strongly. They echo lessons that U.S. regulators have been emphasizing for years. The SEC’s action against Knight Capital remains one of the most famous examples of what happens when code deployment, testing, and risk controls fail at the same time. In that case, a defective deployment process helped trigger millions of unintended orders, massive unwanted positions, and losses that became part of modern market folklore.

Meanwhile, U.S. commodities enforcement has shown that algorithms are a source of conduct risk as well as operational risk. Manipulative strategies can be encoded just as easily as helpful ones. An algorithm is not moral. It just does what it is told, which is a lovely feature until someone tells it the wrong thing.

Real examples that explain the FCA’s concern

Citigroup’s trading controls failure

The FCA’s 2024 fine against Citigroup Global Markets Limited offered a vivid reminder that primary controls matter. According to the FCA, a trader intended to sell a basket of equities worth about $58 million but, through an input error in the order management system, created one worth about $444 billion. Some controls worked and blocked roughly $255 billion of it, but the remaining $189 billion or so still reached the trading algorithm. About $1.4 billion of equities were sold across European markets before the order was cancelled, causing a brief market disturbance.

The lesson is simple but brutal: partial controls are not the same as effective controls. A pop-up warning that can be overridden casually is not a serious safeguard. A real-time monitoring process that escalates too slowly is not real-time in any useful sense.

The London Metal Exchange nickel episode

The FCA’s 2025 enforcement action against the London Metal Exchange told a different story, but one that points in the same direction. In severe market stress, the exchange’s systems, escalation routes, and controls were not adequate to maintain orderly trading. Junior staff on duty did not have the training to recognize broader signs of disorder beyond obvious error trades or rogue algorithms.

That case matters because it shows that algorithmic risk is not limited to firms running strategies. Venue controls, volatility mechanisms, escalation frameworks, and operational resilience all shape market outcomes too. Market orderliness is a team sport, even when the team is mostly code and control rooms.

What all of this says about the FCA’s broader direction

The review fits neatly into the FCA’s broader supervisory strategy for principal trading firms. The regulator has already signaled that algorithmic controls, market conduct, and operational resilience are areas of continuing focus. It has also shown interest in how AI and machine learning may strengthen surveillance, particularly through its market abuse surveillance TechSprint.

In other words, the FCA is not anti-technology. It is anti-uncontrolled technology. That is a very different posture. The regulator appears willing to accept that markets are becoming more automated and more complex, but it expects firms to prove that governance and controls are evolving at the same pace.

What firms should do now

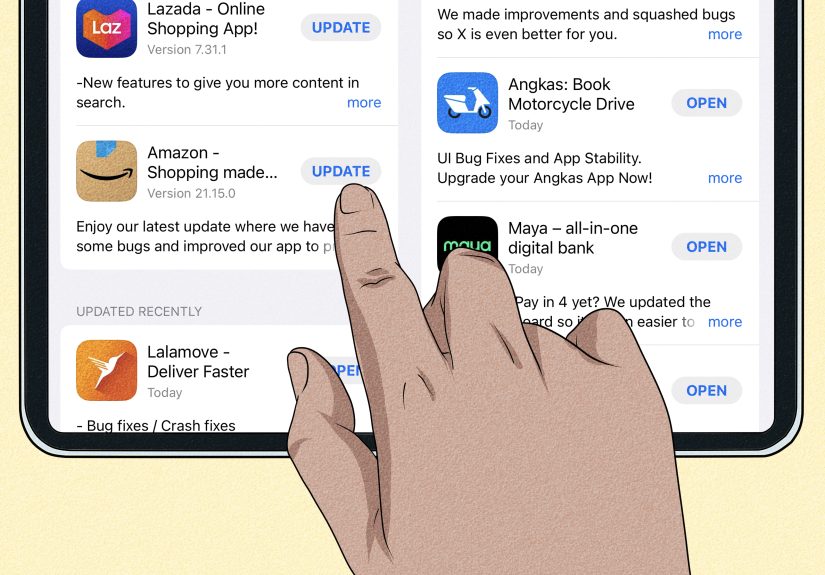

For firms reading between the regulatory lines, the action list is fairly obvious. First, refresh the RTS 6 self-assessment and make sure it is complete, evidence-based, and aligned to how the business actually operates. Second, invest in technical capability inside compliance and risk functions. Third, tighten change management so that material updates, approvals, and testing records are documented clearly. Fourth, review whether surveillance systems and staffing still match the nature, scale, and complexity of current trading activity.

Boards and senior managers should pay special attention here. The FCA’s message is not that algorithmic trading is a quirky technical corner of the business that can be delegated into silence. Senior leadership remains accountable. Firms need management information that is meaningful, not decorative. If the board receives glossy summaries while key surveillance backlogs, weak vendor understanding, or missing approvals sit in the background, governance has already failed.

The practical experience behind the headline: what this looks like inside firms

In practice, the experience of dealing with algorithmic trading controls rarely feels dramatic at first. It usually begins with something ordinary: a model update, a venue change, a parameter tweak, a new data source, a request to speed up deployment, or a compliance review that arrives five minutes before everyone wants to go home. Nobody says, “Today we will create a regulatory case study.” Yet that is exactly how many case studies begin.

On a typical trading desk, developers are focused on performance, traders are focused on execution quality, risk teams are focused on thresholds, and compliance is focused on whether the strategy behaves inside the rules. Each group thinks it is being reasonable. The friction starts when those perspectives are not stitched together. A developer may assume a control exists downstream. A trader may assume surveillance is calibrated for a new strategy. Compliance may assume a vendor model has already been validated in enough depth. Risk may assume someone else owns the documentation. The result is not always a spectacular blow-up. More often, it is a slow accumulation of ambiguity.

That ambiguity is exactly what the FCA seems to dislike. And honestly, fair enough. In well-run firms, algorithmic control frameworks are visible in daily behavior. Change approvals are clear. Inventories are current. Testing records are easy to retrieve. Surveillance teams can explain why alert thresholds are set where they are. Senior managers can describe which risks matter most and how they know the controls still work. Those firms may not be perfect, but they are legible. Regulators love legibility.

In weaker firms, the lived experience is very different. The documentation exists, but only in pieces. The model owner has changed twice. The inventory names a strategy but does not explain its intent. Material changes are decided informally. Escalation works, except when the key person is on vacation. Surveillance gets updated, but only after the business has already moved on. That kind of environment can function for longer than people expect, which is precisely why it is risky. Fragile systems often look fine until the day they are suddenly very not fine.

There is also a human experience buried inside all this regulation. Technical compliance staff are hard to hire. Trading systems are growing more complex. AI tools are entering development and surveillance workflows. Data pipelines multiply. Legacy code still lurks in corners like a raccoon in an attic. Firms are trying to move fast in markets that reward speed, while supervisors are asking for proof that speed has not outrun control. That tension is real, and it is not going away.

The smartest firms treat that tension as a management problem, not a legal annoyance. They recognize that good controls are not anti-innovation. They are what make innovation durable. A trading business with clear governance, disciplined testing, effective kill functionality, well-calibrated surveillance, and informed senior oversight is not just better positioned for an FCA visit. It is better positioned to survive its own complexity. And in algorithmic trading, survival is underrated.

Conclusion

The FCA’s findings on algorithmic trading controls should be read as more than a compliance memo for specialist firms. They are a broader statement about how modern markets should be governed. The regulator is effectively saying that if firms want the benefits of automation, they must also carry the burden of disciplined oversight.

That means up-to-date governance, technically capable compliance teams, rigorous testing, clearly owned controls, strong surveillance, and leadership that actually understands what the machines are doing. None of that is glamorous. But then again, neither is explaining to a regulator why your “temporary workaround” became a trading incident.

For firms that rely on algorithmic trading, the message is sharp but constructive. The FCA is not trying to ban innovation. It is trying to make sure innovation does not outrun market integrity. In a world where code can move faster than judgment, that is not old-fashioned regulation. It is common sense with a very large rulebook.