Table of Contents

- What “bit color depth” means (without making your eyes glaze over)

- How LCDs produce color (and why bit depth isn’t the only knob)

- 6-bit, 8-bit, 10-bit: the “native” part vs the “marketing” part

- Color banding: why you see it, and why 10-bit helps

- HDR and “10-bit”: why they’re practically roommates

- Getting real 10-bit on your PC: the end-to-end checklist

- Buying advice: who actually needs higher bit depth?

- Troubleshooting: “Why can’t I select 10-bit?”

- Experiences from the real world: where bit depth shows up (and where it doesn’t)

- 1) The “Why does my gradient look cursed?” moment

- 2) Photo editing: skies are the ultimate lie detector

- 3) Gaming HDR: when 10-bit helps more than you expected

- 4) The refresh-rate trade: “Do I want 240 Hz or 10-bit?”

- 5) The dock dilemma: USB-C convenience vs color depth reality

- 6) The “8-bit + FRC is totally fine” realization

- Conclusion

Ever looked at a sunset photo on your monitor and thought, “Why does the sky look like it was frosted by a very

determined cake decorator?” That’s usually not your imagination; it’s your display (and sometimes your whole setup)

running out of color steps. The good news: once you understand bit color depth,

you can predict when “10-bit” matters, when it’s marketing glitter, and when you just need to toggle one setting

you’ve ignored since 2017.

This guide breaks down how LCD displays handle color, what 6-bit, 8-bit, and 10-bit

really mean, why you’ll see terms like 8-bit + FRC, and how to get the smoothest gradients for

gaming, HDR movies, and creative work, without turning your life into a cable spreadsheet (unless you enjoy that sort

of thing, in which case… respect).

What “bit color depth” means (without making your eyes glaze over)

Bit depth is the number of bits used to encode the brightness level of each color channel:

red, green, and blue (RGB). You’ll often see it written as

bpc (bits per channel). More bits = more steps between dark and bright for each channel, which

usually means smoother gradients and fewer visible “bands.”

The math you can actually use

Each extra bit doubles the number of levels per channel. That adds up fast:

| Panel/Signal | Bits per channel | Levels per channel | Total possible colors (RGB) | Where you’ll typically see it |

|---|---|---|---|---|

| “6-bit” | 6 bpc | 64 | ~262,000 | Budget/older LCDs, office panels |

| “8-bit” | 8 bpc | 256 | ~16.7 million | Mainstream monitors, most SDR content |

| “10-bit” | 10 bpc | 1,024 | ~1.07 billion | HDR workflows, pro displays, higher-end monitors |

Important twist: a monitor can accept a 10-bit signal while the panel itself isn’t truly 10-bit. That’s

where the “plus” terms show up.
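
If you want to check the table's arithmetic yourself, here is a minimal Python sketch. The function names are made up for illustration; the only real content is that each channel gets 2 to the power of its bit count in levels, and the three channels multiply together.

```python
def levels_per_channel(bits: int) -> int:
    """Number of discrete levels one channel can take at a given bit depth."""
    return 2 ** bits


def total_colors(bits: int) -> int:
    """Number of RGB combinations: three channels, each with 2**bits levels."""
    return levels_per_channel(bits) ** 3


for bits in (6, 8, 10):
    print(f"{bits} bpc: {levels_per_channel(bits):,} levels, "
          f"{total_colors(bits):,} colors")
```

The exact totals are 262,144, 16,777,216, and 1,073,741,824, which is where the rounded figures in the table come from.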

How LCDs produce color (and why bit depth isn’t the only knob)

Most LCDs use a backlight and a layer of liquid crystals that act like tiny shutters. The panel controls how much

light passes through subpixels (R, G, B) to create a final color. That control is driven by electronics, commonly a

scaler and a driver IC, that translate incoming video data into voltage changes for each subpixel.

Bit depth determines how many voltage “steps” the system can aim for. But the final picture also depends on:

- Color gamut (how wide a range of colors the display can show: sRGB vs DCI-P3 vs Adobe RGB)

- Contrast ratio (how deep blacks are compared to bright whites)

- Calibration and color management (how accurately it hits target colors)

- Panel tech (IPS, VA, TN; each has trade-offs in viewing angles and contrast)

Think of it like cooking: bit depth is how many “spice levels” you can choose, but gamut is whether you own spices

beyond salt and pepper, and calibration is whether you’re measuring or just sprinkling with confidence.

6-bit, 8-bit, 10-bit: the “native” part vs the “marketing” part

Native bit depth

A native 8-bit panel can produce 256 levels per channel without relying on tricks. A

native 10-bit panel can do 1,024 levels per channel, which is great for smooth gradients, shadow detail, and

HDR tone transitions.

8-bit + FRC (Frame Rate Control): the helpful illusion

Many monitors labeled “10-bit” are actually 8-bit + FRC. FRC is a type of temporal dithering:

the display rapidly alternates between nearby color values to simulate intermediate steps. To your eyes, it can look

like extra shades, especially in gradients, without the panel being truly 10-bit at every moment.
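
Here is a toy Python sketch of the idea behind temporal dithering. It is not how any particular monitor implements FRC (real panels typically use more elaborate patterns spread across neighboring pixels as well as time), and `frc_frames` is a hypothetical name. It only shows that four frames of 8-bit values can average out to a 10-bit target.

```python
def frc_frames(target_10bit: int) -> list[int]:
    """Return four 8-bit levels whose average approximates a 10-bit target.

    A 10-bit scale has 4 codes for every 8-bit code, so the remainder of
    target / 4 says how many of the 4 frames should show the brighter level.
    """
    low, remainder = divmod(target_10bit, 4)
    high = min(low + 1, 255)  # clamp at the top of the 8-bit range
    return [high] * remainder + [low] * (4 - remainder)


frames = frc_frames(513)          # 10-bit code 513 sits between 8-bit 128 and 129
print(frames, sum(frames) / 4)    # average lands at 513 / 4 on the 8-bit scale
```

The closer the flicker is to imperceptible, the more the eye blends the frames into the in-between shade, which is why implementation quality matters so much.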

In practice, 8-bit + FRC can be very good. For most people, it reduces banding and looks “10-bit

enough” in real content. But there are caveats:

- Not identical to native 10-bit, especially in very smooth gradients and dark tones.

- Potential artifacts in edge cases (fine gradients, compression noise, certain refresh behaviors).

- Panel quality matters: two “8-bit + FRC” monitors can look noticeably different.

Meanwhile, some budget panels are 6-bit + dithering to mimic 8-bit. That can be okay for spreadsheets

and web browsing, but it’s where “banding cake skies” tend to be born.

Color banding: why you see it, and why 10-bit helps

Color banding happens when a smooth gradient (like a blue sky) doesn’t have enough intermediate

steps, so it breaks into visible rings or stripes. Banding is more likely when:

- The content is heavily compressed (streaming video, low-bitrate uploads).

- The gradient is smooth and large (skies, studio backdrops, fog, shadows).

- The panel or signal path is limited to fewer steps (6-bit panels, 8-bit pipelines).

- The display is misconfigured (wrong output format, limited range, odd HDR toggles).

10-bit color (or a good 8-bit+FRC implementation) helps because there are more “stairs” between

dark and bright. Where an 8-bit signal jumps straight from shade #101 to #102, a 10-bit signal has three extra shades

in between, so the transition looks continuous.
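
To put rough numbers on the "stairs" idea, the sketch below estimates how wide each visible step is when a gradient spans only part of the brightness range, as a sky usually does. The function name and the example figures (a 1920-pixel-wide gradient covering 20% of the range) are illustrative assumptions, and it ignores dithering, gamma, and compression.

```python
def band_width_px(ramp_px: int, brightness_span: float, bits: int) -> float:
    """Average width in pixels of one step in a horizontal gradient.

    ramp_px:          width of the gradient on screen, in pixels
    brightness_span:  fraction of the full brightness range it covers (0 to 1)
    bits:             bits per channel available to draw it
    """
    steps = max(1, round(brightness_span * (2 ** bits - 1)))
    return ramp_px / steps


for bits in (6, 8, 10):
    width = band_width_px(1920, 0.2, bits)
    print(f"{bits} bpc: each step is about {width:.1f} px wide")
```

Wide steps read as stripes; steps only a few pixels wide blur into a smooth ramp. That is the whole banding story in one division.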

Bit depth vs gamut: don’t mix these up

A common confusion: bit depth is about how many steps you have; gamut is about how

far your colors can reach. You can have a wide-gamut monitor (DCI-P3) that’s still 8-bit, or a 10-bit monitor that’s

basically sRGB. The best option depends on what you do:

- Design/print/photo: wide gamut + accurate calibration often matters as much as bit depth.

- HDR video: 10-bit signal support is a big deal; gamut and brightness matter too.

- Everyday use: solid 8-bit (or 8-bit + FRC) is usually plenty if the panel is decent.

HDR and “10-bit”: why they’re practically roommates

Most mainstream HDR formats you’ll encounter on TVs and monitors expect at least a 10-bit signal.

That’s because HDR isn’t just “brighter”; it also needs smoother transitions to avoid ugly banding in highlights and

shadows. If you’ve seen HDR that looks like neon paint spilled on a flashlight, that’s often a mismatch between

content, tone mapping, panel capability, or settings, not HDR as a concept.

Also: HDR labels vary wildly. Some displays can accept HDR signals but don’t have the contrast, brightness, or color

performance to deliver a convincing HDR experience. Bit depth is one piece of the HDR puzzle, not the entire puzzle box.

Getting real 10-bit on your PC: the end-to-end checklist

Here’s the part that trips people up: you don’t get “10-bit color” just because your monitor says so.

You need an end-to-end 10-bit path: panel capability, signal output, OS behavior, app support, and enough

bandwidth over the cable at your chosen resolution/refresh rate.

1) Confirm what the panel actually is

- Native 10-bit: best-case for smooth gradients and pro work.

- 8-bit + FRC: often excellent; common in “10-bit” consumer monitors.

- 6-bit + dithering: expect more banding in gradients.

2) Check your GPU output settings

Your GPU control panel may allow selecting 8 bpc or 10 bpc. If 10 bpc is available,

try it, especially if your monitor uses FRC, because feeding it a higher-precision signal can help it simulate tones more cleanly.

3) Mind the bandwidth (resolution + refresh rate + bit depth)

Higher refresh rates and resolutions take more bandwidth. Sometimes 10-bit is available at 120 Hz but not 165/240 Hz,

unless you use compression features (like DSC) supported by both GPU and display. If you’re chasing maximum refresh

for esports, 8-bit might be the trade-off. If you’re editing or watching HDR films, you may prefer 10-bit at a lower

refresh rate.
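
A back-of-the-envelope Python sketch of that trade-off follows. It counts visible pixels only, so real links need somewhat more because of blanking intervals, and it ignores DSC entirely. The 25.92 Gbit/s budget is an assumption standing in for a 4-lane DisplayPort 1.4 (HBR3) link after encoding overhead; your GPU, monitor, and cable may differ.

```python
# Assumption: usable payload of a 4-lane DisplayPort 1.4 (HBR3) link after
# 8b/10b encoding overhead, in Gbit/s. Check your own hardware's specs.
DP14_PAYLOAD_GBPS = 25.92


def active_pixel_gbps(width, height, refresh_hz, bpc, channels=3):
    """Uncompressed data rate for the visible pixels only, in Gbit/s."""
    return width * height * refresh_hz * bpc * channels / 1e9


for refresh_hz in (144, 240):
    for bpc in (8, 10):
        rate = active_pixel_gbps(2560, 1440, refresh_hz, bpc)
        verdict = "under" if rate <= DP14_PAYLOAD_GBPS else "over"
        print(f"1440p @ {refresh_hz} Hz, {bpc} bpc: "
              f"{rate:.2f} Gbit/s ({verdict} the assumed budget)")
```

With these assumed numbers, 1440p at 240 Hz lands under the budget at 8 bpc and over it at 10 bpc, which is the same pattern as the 10-bit option quietly vanishing at the highest refresh rates.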

4) Cable and connection type matter

DisplayPort and newer HDMI versions can both carry 10-bit signals, but your actual results depend on the specific

versions on your GPU and monitor, plus the mode you’re running (resolution/refresh/chroma format). If you’re using

adapters, docks, or questionable “it was $3 and a dream” cables, weird limitations can appear.
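
The "chroma format" part is easier to see with numbers. Chroma subsampling stores color information at lower resolution than brightness, which changes the average number of samples per pixel. The sketch below uses the standard sample counts for 4:4:4, 4:2:2, and 4:2:0; the function name is hypothetical, and how a given link actually packs those samples on the wire can differ from this simple average.

```python
# Average samples per pixel: one luma sample per pixel, plus chroma samples
# shared across 1, 2, or 4 pixels depending on the subsampling scheme.
SAMPLES_PER_PIXEL = {"4:4:4": 3.0, "4:2:2": 2.0, "4:2:0": 1.5}


def bits_per_pixel(bpc: int, chroma: str = "4:4:4") -> float:
    """Average bits of picture data per pixel for a bit depth and chroma format."""
    return bpc * SAMPLES_PER_PIXEL[chroma]


for bpc, chroma in [(8, "4:4:4"), (10, "4:4:4"), (10, "4:2:2"), (12, "4:2:0")]:
    print(f"{bpc} bpc {chroma}: {bits_per_pixel(bpc, chroma):.0f} bits per pixel")
```

By this count, a subsampled 10-bit signal can carry less data per pixel than a full 8-bit 4:4:4 one, which helps explain why a driver may offer higher bit depths only in subsampled modes when bandwidth is tight.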

5) Application support: where “10-bit” becomes visible

Some apps and workflows benefit much more from 10-bit output:

- Photo editing: smoother gradients in skies and studio backdrops; less banding in heavy edits.

- Video color grading: cleaner ramps, better highlight/shadow transitions.

- HDR playback: reduces banding in HDR tone mapping.

- UI/graphic design: gradients can look more “buttery” (technical term).

Buying advice: who actually needs higher bit depth?

If you’re a gamer

For fast competitive play, refresh rate and motion handling often beat bit depth. A strong 8-bit panel is fine. But if

you play HDR single-player titles, story games, or cinematic games, 10-bit signal support (and decent HDR performance)

can meaningfully reduce banding and improve gradients, especially in skies, fog, and shadow-heavy scenes.

If you’re a creator (photo/video/design)

You’ll benefit most when you have:

- Good calibration (factory calibration helps; proper profiling helps more)

- Stable viewing angles (often IPS-type panels)

- Wide-gamut support if your work requires it

- 10-bit pipeline for smoother gradients and fewer banding artifacts

In many real-world setups, a high-quality 8-bit + FRC panel plus strong calibration and color management

beats a “technically 10-bit” panel with mediocre uniformity or accuracy.

If you’re buying for office work

Bit depth usually isn’t the deciding factor. Prioritize text clarity, ergonomics, and glare handling. That said, if you

stare at gradients all day (dashboards, UI mocks, visualizations), an 8-bit panel (or 8-bit+FRC) can feel nicer than 6-bit.

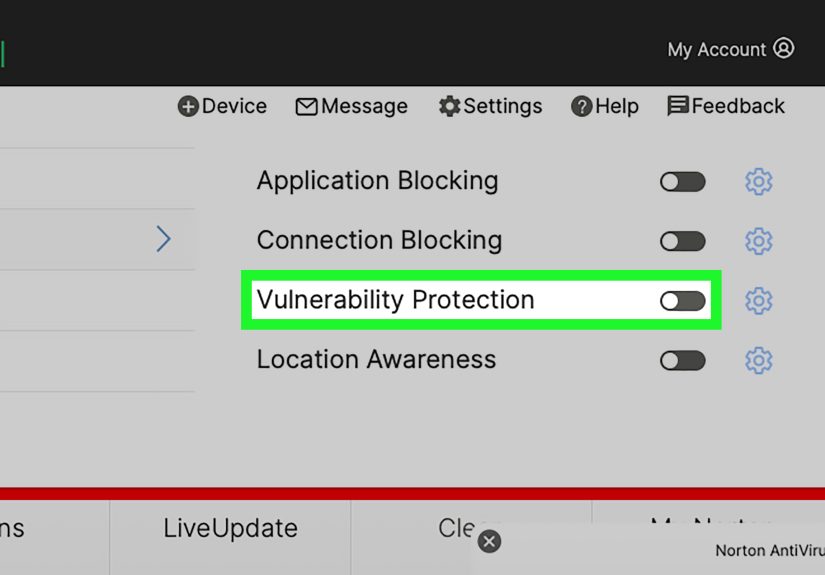

Troubleshooting: “Why can’t I select 10-bit?”

If 10 bpc is missing or greyed out, common reasons include:

- Bandwidth limits at your current resolution/refresh (try lowering refresh rate).

- Dock/adapter limitations (some USB-C docks don’t pass full bandwidth modes).

- Signal format constraints (some modes only allow 10/12-bit with certain chroma formats).

- Monitor OSD settings that cap bandwidth (certain “compatibility” modes).

- OS/HDR toggles affecting output mode (especially when HDR is enabled/disabled).

If enabling 10-bit makes the desktop look “washed out,” double-check full vs limited range settings, HDR state, and

color management profiles. Sometimes the problem isn’t the bit depth; it’s the display pipeline choosing a different

output format than you expected.
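
The full vs limited range mix-up is simple enough to sketch. Limited ("video") range conventionally reserves codes 16 to 235 at 8-bit (64 to 940 at 10-bit) for black through white, while full range uses everything from 0 up. The Python below is an illustration with a hypothetical function name, not a description of what any specific driver does internally.

```python
def full_to_limited(value: int, bits: int = 8) -> int:
    """Map a full-range code to limited range (16-235 at 8-bit, scaled up for 10-bit)."""
    scale = 2 ** (bits - 8)           # 1 at 8-bit, 4 at 10-bit
    low, high = 16 * scale, 235 * scale
    full_max = 2 ** bits - 1
    return round(low + value * (high - low) / full_max)


black = full_to_limited(0)      # black as sent in limited range
white = full_to_limited(255)    # white as sent in limited range
print(f"limited-range black={black}, white={white}")

# If the display wrongly treats that signal as full range, black shows up as
# a dark gray and white never reaches peak: the classic washed-out look.
print(f"black appears at {black / 255:.1%} of full scale, white at {white / 255:.1%}")
```

If the GPU and monitor disagree about which range is in use, you get either this raised-black haze or the opposite problem of crushed shadows, regardless of whether the signal is 8-bit or 10-bit.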

Experiences from the real world: where bit depth shows up (and where it doesn’t)

Let’s make this practical with a few common, very human scenarios, because “10-bit” is easy to understand in theory

and weirdly slippery in real life.

1) The “Why does my gradient look cursed?” moment

A designer exports a slick landing-page hero with a soft purple-to-blue gradient. On their laptop it looks smooth.

On a budget office monitor, it looks like a stack of colored napkins. That’s often a 6-bit panel

(or a low-quality dithering implementation) plus an 8-bit workflow. Switching to a better monitor (even a good 8-bit IPS)

can fix the problem instantly, no redesign required. The gradient wasn’t bad; the display just didn’t have enough steps

to render it gracefully.

2) Photo editing: skies are the ultimate lie detector

Photographers notice banding fastest in clear skies and studio backgrounds, especially after heavy edits (contrast,

dehaze, gradients, selective color). A 10-bit-capable workflow can reduce that “striped sky” effect, but only if the

pipeline cooperates. It’s common to buy a “10-bit” monitor and still see banding because the GPU output stayed at 8 bpc,

or the connection mode silently dropped precision at a high refresh rate. The takeaway: the monitor matters, but so does

the path feeding it.

3) Gaming HDR: when 10-bit helps more than you expected

In HDR games, subtle shadow detail and highlight roll-offs can look rough on an 8-bit path, especially in foggy scenes,

sunsets, or dark interiors lit by neon signage. Players sometimes describe 10-bit HDR as looking “less noisy” or “more

natural,” even when they can’t point to a single color. What they’re often noticing is smoother tonal transitions:

fewer abrupt jumps in brightness and color. The funny part? Some people toggle 10-bit and swear everything changed, when

the only real change was that banding in one specific scene got quieter.

4) The refresh-rate trade: “Do I want 240 Hz or 10-bit?”

High refresh rate is addictive. Once you’ve used 240 Hz, 60 Hz feels like dragging windows through peanut butter.

But at extreme refresh rates and high resolutions, bandwidth becomes the boss of your life. Users often discover that

10 bpc is available at 120/144 Hz but not at 165/240 Hz unless compression modes are supported and enabled. This is one

of the most common “Wait, my monitor says 10-bit!” complaints. The practical fix is choosing a priority:

ultra-high refresh for competitive play, or higher precision for HDR and creative work. Some people even save two profiles:

“Fast mode” and “Pretty mode.” (Both are valid life choices.)

5) The dock dilemma: USB-C convenience vs color depth reality

Modern desks love a single-cable setup: laptop + USB-C dock + monitor + peripherals. It’s clean. It’s elegant. It’s also

where color depth sometimes goes to take an unscheduled vacation. Certain docks or adapters can limit bandwidth or supported

output modes, which can quietly cap you at 8-bit even if your monitor and GPU can do more. People often troubleshoot for

hours before trying the simplest test: connect directly (DisplayPort/HDMI) and see if 10 bpc appears. If it does, the dock

is the bottleneck, not your monitor, not your GPU, not the universe personally targeting you.

6) The “8-bit + FRC is totally fine” realization

Many users go shopping thinking native 10-bit is the only “real” option. Then they see a high-quality 8-bit + FRC panel

that looks fantastic in gradients, games, and HDR demos. In day-to-day use, the difference between native 10-bit and

good FRC can be subtle (sometimes invisible) unless you’re pushing specific content (professional grading, precision ramps,

heavy shadow work). The more important lesson: don’t fixate on one spec. Uniformity, calibration, contrast behavior,

and panel quality can matter more than the last bit of bit depth for most workflows.

Conclusion

Bit color depth is one of those specs that’s both simple (more steps = smoother gradients) and sneaky (your whole setup

has to support it). For most people, a good 8-bit panel (or a well-implemented 8-bit + FRC

monitor) delivers excellent results. If you work with gradients professionally, grade video, or care about HDR looking smooth

instead of “banded,” then 10-bit signal support and a clean end-to-end pipeline are worth chasing.

The best move is to treat “10-bit” like a checklist item, not a magic spell. Confirm the panel type, set the GPU output

correctly, watch your bandwidth limits, and you’ll get the benefits you’re paying for, without the frosted-cake sky.