Table of Contents

- Meet the “Toy”: Teachable Machine (Which Is Not Actually a Toy)

- The Bigger Trend: Machine Learning Is Becoming a New Kind of Programming

- Why Google Keeps Shipping Playful ML Demos

- The Wild Future of Machine Learning, According to Google’s “Toys”

- What This Means for Creators, Businesses, and Anyone With a Webcam

- How to Try Google’s “Stunning New Toy” Today (Without Becoming a Dataset Horror Story)

- Conclusion: The Future Is Interactive (and a Little Bit Ridiculous)

Every once in a while, Google releases something that looks like a harmless science-fair project, then quietly

changes how an entire industry thinks. Think of it as the “free sample” strategy, but for the future.

One of the best examples is Google’s Teachable Machine: a playful, webcam-powered tool that lets you

“teach” a computer to recognize images, sounds, or body poses, often in minutes, with zero code. It feels like a toy

because it’s fun. It feels like magic because it works. And it’s a sneak preview of where

machine learning is headed: away from “programming” and toward teaching.

In this article, we’ll unpack what Google’s ML toys reveal about the future of machine learning, from

no-code model training and multimodal AI, to agentic browsers, world models, and robots that can actually do the thing

you asked for (instead of writing a 12-paragraph apology about why they can’t).

Meet the “Toy”: Teachable Machine (Which Is Not Actually a Toy)

What it does in plain English

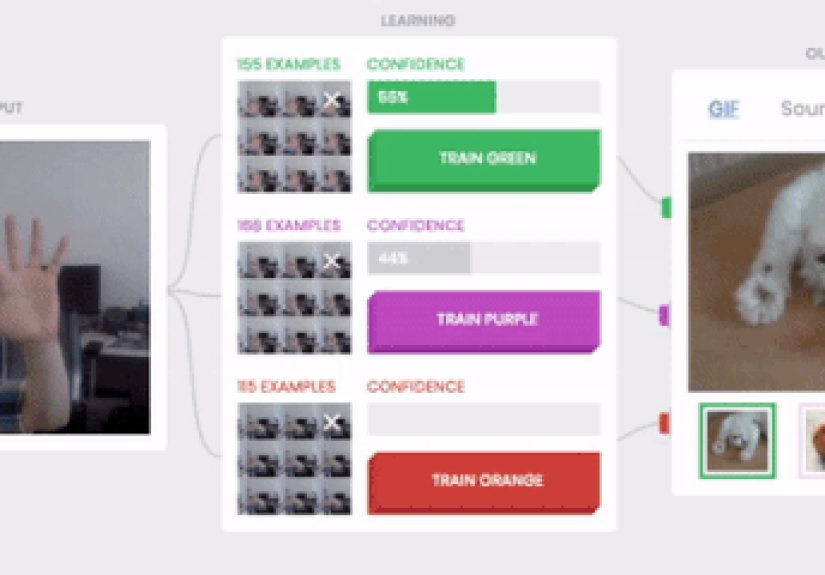

Teachable Machine is a web-based tool that helps you build simple machine learning models by example. You create a few

categories (“classes”), show the camera or microphone some samples, hit Train, and then test the model live.

You can export what you trained and use it in websites, apps, or creative projects.

The genius is the interface: instead of asking beginners to understand gradients, tensors, and why the loss curve looks

like a ski slope, Teachable Machine lets you start with an instinct everyone already has: show and tell.

If you can teach a toddler the difference between a cat and a dog, you can teach a model a beginner-friendly version of the same idea.

The secret sauce: transfer learning, hiding in your browser

Under the hood, Teachable Machine leans on a powerful trick: transfer learning. Instead of training a model

from scratch (which is expensive, slow, and emotionally damaging), it reuses a pretrained neural network and fine-tunes the last layers

for your custom categories. That’s why it can learn quickly from a small dataset and why it feels so responsive.
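
To make the idea concrete, here is a minimal sketch of transfer learning in plain Python. It is not Teachable Machine's actual code: the "pretrained network" is a stand-in (a frozen random projection, purely an assumption for illustration), and names like `extract_features` and `train_head` are hypothetical. What it does show is the shape of the trick: the feature extractor stays frozen, and only a small classifier on top gets trained.

```python
import math
import random

random.seed(0)  # fixed seed so the sketch is repeatable

IN_DIM, FEAT_DIM = 4, 8

# Stand-in for a pretrained network: a frozen random projection plus tanh.
# (Assumption for illustration; a real tool would load actual pretrained weights.)
FROZEN_W = [[random.uniform(-1, 1) for _ in range(IN_DIM)] for _ in range(FEAT_DIM)]

def extract_features(x):
    """Frozen feature extractor: its weights are never updated."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in FROZEN_W]

def predict_proba(head, feats):
    """Trainable head: logistic regression on top of the frozen features."""
    z = head["b"] + sum(w * f for w, f in zip(head["w"], feats))
    return 1.0 / (1.0 + math.exp(-z))

def train_head(samples, labels, epochs=50, lr=0.5):
    """Fit only the head with per-sample gradient descent on the log loss."""
    feats = [extract_features(x) for x in samples]  # extracted once, then reused
    head = {"w": [0.0] * FEAT_DIM, "b": 0.0}
    for _ in range(epochs):
        for f, y in zip(feats, labels):
            err = predict_proba(head, f) - y  # d(loss)/dz for the log loss
            head["w"] = [w - lr * err * fi for w, fi in zip(head["w"], f)]
            head["b"] -= lr * err
    return head

def make_samples(center, n, noise=0.2):
    """Toy 'images': noisy copies of a class prototype."""
    return [[c + random.gauss(0, noise) for c in center] for _ in range(n)]

CLASS_A, CLASS_B = [1, 1, -1, -1], [-1, -1, 1, 1]

# A small dataset, in the spirit of "show it a few examples".
train_x = make_samples(CLASS_A, 10) + make_samples(CLASS_B, 10)
train_y = [1] * 10 + [0] * 10
head = train_head(train_x, train_y)

# Evaluate on fresh samples the head never saw.
test_x = make_samples(CLASS_A, 20) + make_samples(CLASS_B, 20)
test_y = [1] * 20 + [0] * 20
hits = sum((predict_proba(head, extract_features(x)) >= 0.5) == bool(y)
           for x, y in zip(test_x, test_y))
accuracy = hits / len(test_y)
print(f"held-out accuracy: {accuracy:.2f}")
```

Only the nine numbers in `head` are learned here, which is why training takes a blink. A real pretrained network supplies far richer features than a random projection, but the division of labor is the same.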

Google has also leaned into on-device and in-browser ML for these experiences. In the earlier Teachable Machine versions,

training could happen locally, which makes demos feel instant and also hints at a privacy-friendly direction: fewer raw samples shipped off

to a distant data center just to get a prediction.

Why it matters: machine learning becomes “clickable”

Teachable Machine isn’t just an educational tool; it’s a UI prototype for the future. It shows what happens when

machine learning becomes a user interface: you don’t configure software so much as you shape a model’s behavior.

And that shift is enormous.

When ML turns into a clickable experience, suddenly “building AI” isn’t limited to engineers. Teachers, designers, marketers,

hobbyists, and students can experiment with computer vision, audio classification, and simple

pose detection without needing a PhD or a support group.

The Bigger Trend: Machine Learning Is Becoming a New Kind of Programming

From instructions to examples

Traditional programming is explicit: “If X, do Y.” Machine learning is statistical: “Here are examples of X and not-X;

learn the boundary.” Google’s ML toys make that difference visceral.
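
A tiny side-by-side sketch of that contrast, using a made-up "is it hot?" task (the temperatures, labels, and function names are all hypothetical). The first function is a rule someone wrote down; the second picks the cut point that misclassifies the fewest labeled examples.

```python
def rule_is_hot(temp_c):
    """Traditional programming: a human picks the threshold up front."""
    return temp_c >= 30.0

def learn_threshold(examples):
    """Learning in miniature: choose the cut point with the fewest errors."""
    values = sorted(v for v, _ in examples)
    # Candidate boundaries sit halfway between neighboring examples.
    candidates = [(a + b) / 2 for a, b in zip(values, values[1:])]

    def errors(t):
        return sum((v >= t) != label for v, label in examples)

    return min(candidates, key=errors)

# Labeled examples: (temperature, "did people call this hot?")
examples = [
    (18.0, False), (22.0, False), (25.0, False),
    (27.0, True), (31.0, True), (35.0, True),
]

threshold = learn_threshold(examples)
print("learned threshold:", threshold)
print("rule says 27.0 is hot:", rule_is_hot(27.0))
print("learned boundary says 27.0 is hot:", 27.0 >= threshold)
```

The hand-written rule and the learned boundary disagree about 27 degrees, and neither is "wrong" in the abstract. The learned one simply reflects the examples it was shown, which is exactly why the choice of examples matters so much.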

In practice, that means the “code” is increasingly the dataset: what you choose to show the model, what you leave out,

and how consistent your examples are. If your samples are chaotic, your model will become a chaos connoisseur.

Human-in-the-loop, gamified

Google has a long history of turning ML training concepts into interactive experiences. “Quick, Draw!” is a classic:

you doodle a prompt in 20 seconds, and a neural network tries to guess what you’re drawing. It’s fun, slightly stressful,

and secretly educational: you can feel the model generalizing in real time, sometimes brilliantly, sometimes with the confidence

of a guy explaining a movie he hasn’t seen.

These playful systems also demonstrate a deeper reality: many ML breakthroughs aren’t just about bigger models; they’re about

better feedback loops. When humans interact with models, the data gets richer, edge cases surface, and the system improves.

(It’s the wholesome version of “the internet trained it,” which is a phrase that usually ends badly.)

Why Google Keeps Shipping Playful ML Demos

Because AI literacy is now a survival skill

The world is flooded with AI features: some helpful, some questionable, some clearly invented during a meeting where nobody said “no.”

Tools like Teachable Machine help people understand the basics: bias, overfitting, false positives, data quality, and why

“it worked once” is not a deployment strategy.

Because playful prototypes become serious product patterns

Google’s experiments often foreshadow mainstream features. The “toy” interface (show the model examples, get instant feedback,

iterate quickly) shows up everywhere now: no-code ML platforms, automated labeling tools, and product workflows where humans guide

models with small, high-signal inputs.

Even the broader Google ecosystem echoes this direction: from web ML demos built with JavaScript ML libraries, to cloud platforms

that package training, evaluation, and deployment into increasingly “buttoned-up” experiences for teams that want outcomes, not research projects.

Because Google is betting on multimodal, agentic AI

Teachable Machine is “teach it with examples.” The newest wave is “show it the world.” Google has been pushing

multimodal AI that can understand text, images, audio, and video together, especially through Gemini’s real-time experiences.

When an assistant can see your screen or camera feed and respond conversationally, it’s basically Teachable Machine’s philosophy scaled up:

context becomes the interface.

The Wild Future of Machine Learning, According to Google’s “Toys”

1) Multimodal assistants that understand what you’re looking at

A major leap in ML isn’t just smarter text; it’s AI that understands live context. Google has been rolling multimodal features into

Gemini experiences, including real-time conversations where you can share your camera view or screen and get immediate responses.

This changes the “input problem.” Instead of typing a perfect prompt, you can point at the messy reality:

a confusing product page, a broken appliance, a math problem, or a paint color that looks different in every room because sunlight is a liar.

The model’s job becomes: interpret, reason, and respond with useful next steps.

2) Agentic AI: from answering questions to doing the work

Here’s where things get spicy: Google has been experimenting with AI agents that can operate in a browser,

handle multi-step tasks, and complete workflows like research, form-filling, planning, and comparison shopping.

In early 2026, Google began adding more agent-like capabilities into Chrome for U.S. users on certain AI subscriptions,

signaling that “the browser” is becoming an action layer, not just a window.

If Teachable Machine is “train a mini model,” agentic browsing is “train the assistant to navigate your digital life.”

The scary part isn’t that it can click buttons. The scary part is that it can click buttons confidently.

That’s why responsible designs keep humans in the loop for final actions (like purchases and posting).
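
What "human in the loop for final actions" might look like in code, as a rough sketch. Nothing here reflects how any Google product is actually built: the `Action` type, the `SENSITIVE_KINDS` set, and the `confirm` callback are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical action kinds that should never run without a human "yes".
SENSITIVE_KINDS = {"purchase", "post"}

@dataclass
class Action:
    kind: str          # e.g. "search", "compare", "purchase"
    description: str

def run_plan(plan: List[Action], confirm: Callable[[Action], bool]) -> List[str]:
    """Run routine steps directly; ask the human before sensitive ones."""
    log = []
    for action in plan:
        if action.kind in SENSITIVE_KINDS and not confirm(action):
            log.append(f"SKIPPED {action.kind}: {action.description}")
            continue
        log.append(f"DONE {action.kind}: {action.description}")
    return log

plan = [
    Action("search", "find two blenders under $80"),
    Action("compare", "list differences between the two"),
    Action("purchase", "buy the cheaper blender"),
]

# Here the "human" declines everything; a real UI would show a prompt instead.
log = run_plan(plan, confirm=lambda action: False)
for line in log:
    print(line)
```

The agent still does the tedious research and comparison on its own. It just can't spend your money or speak in your name until you say so.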

3) World models: AI that can simulate and predict

Another frontier is the rise of world models: systems that generate interactive environments and maintain

consistency over time, remembering what changed, what moved, and what should still be there later.

This matters because the real world has continuity. If an AI can’t track “I put the keys on the table,” it can’t reliably help you.

(It also can’t reliably win at “find my stuff,” which is humanity’s hardest daily game.)

Interactive world modeling is more than a flashy demo. It’s a training ground for agents that need to plan, test actions safely,

and develop something like intuition about physical cause-and-effect before they’re unleashed on your kitchen.

4) Robotics: language-to-action, with fewer faceplants

Google DeepMind has been showcasing robotics work that connects language understanding to physical action:

robots that can interpret instructions and perform tasks in dynamic environments. When paired with multimodal perception,

the “toy” becomes real-world capable: the system sees the objects, understands the goal, and executes steps with feedback.

The direction is clear: robotics is becoming less about rigid scripts and more about adaptable policies guided by AI models.

The long-term dream is a generalist robot that can handle novel tasks the way humans do: by reasoning, not memorizing.

5) Generative media: creativity tools with ML as the engine

Google has also pushed into generative AI for images, video, and music. The pattern matches the “toy” philosophy:

make the experience approachable, then let creators iterate quickly. Video generation, higher-quality image generation,

and “sandbox” tools for music all point to a future where ML is a creative collaborator: fast, flexible, and occasionally weird in a delightful way.

The practical impact is huge: faster prototyping, lower production barriers, and a new wave of creative experimentation.

The risks are also huge: misinformation, synthetic media abuse, and a growing need for provenance, watermarking,

and platform policies that don’t crumble the moment someone discovers “Upload” buttons exist.

What This Means for Creators, Businesses, and Anyone With a Webcam

No-code AI is real, and it’s moving upstream

The biggest takeaway from Google’s ML toys is that no-code AI isn’t just a trend; it’s an interface shift. When model-building

becomes easy, more people can prototype ideas: interactive installations, accessibility tools, classroom demos, retail experiences,

and lightweight internal automation.

For businesses, this creates a new opportunity (and a new headache): empowering teams to test ML-driven ideas quickly,

while still maintaining guardrails around privacy, security, and data governance.

Data quality beats hype every time

Teachable Machine teaches a lesson that scales to the enterprise: your model is only as good as the examples you give it.

If your training samples are biased, your predictions will be biased. If your categories are fuzzy, your results will be fuzzy.

If your dataset is tiny and repetitive, your model will “learn” your lighting conditions more than your concept.
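
The lighting trap is easy to reproduce in miniature. The sketch below is a toy under loud assumptions: "images" are just two numbers, each object has a made-up reflectance pattern, and the classifier is a plain 1-nearest-neighbor lookup rather than anything Teachable Machine uses. It compares a model trained only in bright light with one trained across several light levels.

```python
import math

# Hypothetical 2-pixel "images": each object has a reflectance pattern,
# and what the camera records is pattern * light level.
PATTERNS = {"mug": (0.9, 0.5), "not_mug": (0.4, 0.5)}

def capture(label, light):
    """Simulate photographing an object under a given light level."""
    return tuple(light * p for p in PATTERNS[label])

def nearest_neighbor(train, image):
    """1-nearest-neighbor: return the label of the closest training image."""
    return min(train, key=lambda item: math.dist(item[0], image))[1]

def accuracy(train, light):
    """Share of objects classified correctly at one light level."""
    tests = [(capture(label, light), label) for label in PATTERNS]
    correct = sum(nearest_neighbor(train, img) == label for img, label in tests)
    return correct / len(tests)

# Training set A: everything photographed in bright light only.
bright_only = [(capture(label, 1.0), label) for label in PATTERNS]

# Training set B: the same objects, plus two dimmer light levels.
diverse = bright_only + [
    (capture(label, light), label) for label in PATTERNS for light in (0.4, 0.7)
]

# Test in bright light and in a dim setting neither model trained on exactly.
results = {
    name: {light: accuracy(train, light) for light in (1.0, 0.45)}
    for name, train in (("bright_only", bright_only), ("diverse", diverse))
}
for name, scores in results.items():
    print(name, scores)
```

In this toy, the bright-only model mistakes the dimly lit mug for "not mug" because a dark mug's numbers land closer to the bright non-mug example. Adding a few dimmer samples fixes it without touching the model at all.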

Responsible ML becomes non-negotiable

As ML becomes easier to deploy, the stakes rise. Bias, privacy, and misuse aren’t theoretical; they’re operational.

That’s why the future of machine learning isn’t only about better models. It’s also about better evaluation, transparency,

user consent, and safety design, especially as agents gain the power to act, not just respond.

How to Try Google’s “Stunning New Toy” Today (Without Becoming a Dataset Horror Story)

A 10-minute mini-project you can actually finish

- Pick a tiny problem: “Recognize my coffee mug vs. not my coffee mug.” Keep it simple.

- Create 2–3 classes: Mug / Not Mug (and maybe “Other Mug” if you live with mug thieves).

- Collect balanced samples: Vary angles, distance, and lighting. Don’t accidentally train on your kitchen counter.

- Train and test: Look for where it fails. Add examples specifically for those failure cases.

- Export the model: If you’re building something, plug it into a lightweight web demo.

- Add guardrails: Clearly label what it can and can’t do. Treat it like a prototype, not a judge.

The point isn’t to build a perfect model. The point is to feel the workflow: examples → training → testing → iteration.

That loop is the heartbeat of modern machine learning, whether you’re teaching a browser demo or shipping AI into a product.
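
Before you hit Train, a quick audit of what you collected can catch the "accidentally trained on the kitchen counter" problem from the sample-collection step. This is a minimal sketch that assumes you jot down a condition tag (here, lighting) for each capture; the tags, counts, and function names are hypothetical.

```python
from collections import Counter

# Hypothetical capture log: (class label, lighting condition) per sample.
samples = (
    [("mug", "daylight")] * 40
    + [("not_mug", "lamp")] * 38
    + [("not_mug", "daylight")] * 2
)

def audit(samples):
    """Count samples per class and per (class, condition) pair."""
    per_class = Counter(label for label, _ in samples)
    per_pair = Counter(samples)
    return per_class, per_pair

def confounded_conditions(samples, min_share=0.9):
    """Flag conditions where one class supplies at least min_share of samples."""
    per_condition = Counter(cond for _, cond in samples)
    _, per_pair = audit(samples)
    flagged = {}
    for (label, cond), n in per_pair.items():
        if n / per_condition[cond] >= min_share:
            flagged[cond] = label
    return flagged

per_class, per_pair = audit(samples)
flags = confounded_conditions(samples)

print("per class:", dict(per_class))
print("conditions dominated by one class:", flags)
```

The classes here are perfectly balanced at 40 each, and the dataset is still a trap: nearly every daylight shot is a mug and every lamp shot is not. A model could score well by learning "daylight means mug" and never look at the mug.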

Conclusion: The Future Is Interactive (and a Little Bit Ridiculous)

Google’s “stunning new toy” isn’t just Teachable Machine; it’s the entire idea that machine learning can be playful,

approachable, and built by showing instead of coding. These experiences are fun on purpose, because fun lowers the barrier to entry.

And once you lower the barrier, the future walks right in and makes itself comfortable.

Today, the “toy” is a no-code ML demo. Tomorrow, it’s a multimodal assistant that sees what you see. Next, it’s an agent that does the work

inside your browser. After that, it’s a world model that can simulate outcomes and a robot that can execute plans safely.

The wild future of machine learning isn’t only smarter predictions; it’s systems that interact, adapt, and act.

Hands-On Experiences You Can Try

Want the future to feel less like a headline and more like something you can poke with a stick? Here are five hands-on experiences

that mirror where Google-style machine learning is going, without requiring you to remortgage your home for GPUs.

1) The “Lighting Betrayal” experiment: Train a simple image classifier (mug vs. not mug) and test it in three places:

bright daylight, warm indoor light, and the mysterious half-shadow of a desk lamp. You’ll discover a core ML truth:

models don’t see “mug,” they see patterns. Change the patterns enough and your mug becomes “not mug,” emotionally and statistically.

The lesson: collect diverse samples or your model will become a connoisseur of a single corner of reality.

2) The “Accent Chaos” audio test: Use a sound classifier for claps vs. snaps vs. whistles. Then have two people try it.

Different hands, different microphones, different rooms. Your model will behave like it has opinions. The point isn’t to “fix” it forever,

but to feel why robust ML needs variability and why production ML needs monitoring. Also, it’s a great way to turn a meeting into a percussion jam.

3) The “Pose Detective” mini game: Train a pose model on “hands up” vs. “hands down” and build a tiny browser game:

hands up = jump, hands down = duck. You’ll see how ML becomes a control surface: your body becomes the controller. This is the same UX shift

behind multimodal assistants: interaction becomes natural, continuous, and sometimes hilariously wrong when the camera catches you mid-sneeze.

4) The “Agent, but keep it on a leash” workflow: Try an AI assistant feature that summarizes, compares, or drafts based on what’s on your screen.

Give it a contained task: “Compare these two product pages and list differences.” Then verify manually. You’ll get a feel for the near future:

AI as a co-pilot that accelerates work, while humans stay responsible for final decisions. Think of it as a very fast intern who occasionally hallucinates

a third product you never asked about.

5) The “Synthetic creativity” drill: Generate a few concept images or short video ideas and then do the human part: critique, iterate, and add intent.

The key experience is learning how quickly creative exploration expands when production friction drops. The other key experience is learning restraint:

the best outputs come from clear goals, strong taste, and knowing when to stop prompting and start editing.
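
For the pose game in experience 3, the glue between "model output" and "game input" deserves a little care. Here is one possible sketch, assuming the pose model hands you per-frame class probabilities as a dict; the class names, the 0.8 threshold, and the three-frame hold are arbitrary choices for illustration, not anything a particular library prescribes.

```python
def choose_action(probs, threshold=0.8):
    """Map class probabilities to a game action, or None if the model is unsure."""
    actions = {"hands_up": "jump", "hands_down": "duck"}
    label = max(probs, key=probs.get)
    return actions[label] if probs[label] >= threshold else None

def stable_actions(frames, hold=3, threshold=0.8):
    """Emit an action only once it wins `hold` consecutive frames (a simple debounce)."""
    emitted, streak, last = [], 0, None
    for probs in frames:
        action = choose_action(probs, threshold)
        streak = streak + 1 if action == last else 1
        last = action
        if action is not None and streak == hold:
            emitted.append(action)
    return emitted

# Hypothetical per-frame predictions from the pose model.
frames = [
    {"hands_up": 0.95, "hands_down": 0.05},
    {"hands_up": 0.91, "hands_down": 0.09},
    {"hands_up": 0.55, "hands_down": 0.45},  # mid-sneeze: too uncertain to act on
    {"hands_up": 0.93, "hands_down": 0.07},
    {"hands_up": 0.97, "hands_down": 0.03},
    {"hands_up": 0.96, "hands_down": 0.04},
    {"hands_up": 0.10, "hands_down": 0.90},
    {"hands_up": 0.08, "hands_down": 0.92},
    {"hands_up": 0.05, "hands_down": 0.95},
]

emitted = stable_actions(frames)
print(emitted)
```

The threshold keeps the game from acting on a coin-flip prediction, and the hold keeps one confident-but-wrong frame from launching your character off a cliff. Both trade a little latency for a lot less flailing.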

Put together, these experiences are the headline in miniature: ML is becoming interactive, multimodal, and increasingly action-oriented.

Today you teach a small model with a webcam. Soon you’ll teach systems with context: screens, cameras, workflows, and goals. And that’s why

Google’s “toy” matters: it’s not a distraction. It’s a direction.