- Why IBM’s Next Leap Matters

- IBM Has Already Moved Beyond the “Cute Demo” Stage

- Heron Was Important. Nighthawk Looks Even More Interesting.

- The Real Game-Changer Is Error Correction

- From Loon to Kookaburra to Starling

- Quantum Plus HPC Is the Actual Story

- Where More Powerful IBM Quantum Systems Could Matter First

- What Still Stands in the Way

- Why This Moment Feels Different

- Experience Section: What It Feels Like to Work Around a More Powerful IBM Quantum Platform

- Conclusion

Quantum computing has spent years sounding like the world’s smartest unfinished sentence. Every few months, someone announces a breakthrough, the internet nods politely, and then everyone goes back to their very classical spreadsheets. But IBM’s latest roadmap suggests the story is changing. Fast.

IBM is not just promising “more qubits,” which in quantum computing is a bit like saying a restaurant improved by adding more chairs. Nice, maybe, but not the point. What matters now is whether those qubits are better connected, more stable, more accurate, and easier to scale into systems that can do useful work. By that standard, IBM’s quantum computing platform is entering a far more serious phase.

The company’s current generation of hardware has already pushed deeper into utility-scale experiments, and its next steps are aimed at something even more important: turning quantum computing from an impressive lab demonstration into a practical accelerator for science and industry. That is why IBM’s quantum computing is about to get much more powerful, and not only in raw size. The boost comes from architecture, error correction, modular design, software, and the growing ability to pair quantum systems with classical high-performance computing.

Why IBM’s Next Leap Matters

For years, the public conversation around quantum computing focused on qubit counts. That made sense early on because reaching 50, then 100, then 1,000 qubits felt like climbing a mountain no one had previously seen clearly. But the field has matured. Today, qubit count alone is not enough to impress serious researchers, and frankly, it should not impress readers either.

A quantum computer becomes more useful when it can run deeper circuits with lower error rates, support more complex interactions among qubits, and fit into workflows with classical systems that already do most of the heavy lifting. In other words, a quantum computer has to stop being a science-fair volcano and start becoming part of an actual computing stack.

IBM seems to understand this better than most. Its recent hardware and software moves show a strategy built around performance, not just spectacle. That includes more accurate processors, better qubit connectivity, faster execution, new error-mitigation tools, and a roadmap toward fault-tolerant systems that can handle truly large workloads.

IBM Has Already Moved Beyond the “Cute Demo” Stage

One reason IBM’s roadmap is getting so much attention is that the company is no longer speaking only in distant ambitions. It is stacking new plans on top of demonstrated progress. Its Heron-class processors have already been used to run certain classes of quantum circuits with up to 5,000 two-qubit gates, which is a significant jump in the scale of accurate computation. That matters because deeper circuits are how researchers start probing more realistic problems in chemistry, materials science, and optimization.

Think of it this way: a shallow quantum circuit is like a student piano recital. It proves the instrument works. A deeper circuit is the full concert, where mistakes become painfully obvious. IBM’s claim is that its latest systems can stay coherent and accurate longer, which makes them better suited to real experiments rather than quantum theater.
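A back-of-the-envelope sketch shows why depth is the hard part. It assumes, as a simplification that does not come from IBM’s materials, that every two-qubit gate fails independently with the same probability. The function name and the error rates are hypothetical and chosen only for illustration.

```python
def estimated_success(num_gates: int, error_per_gate: float) -> float:
    """Crude estimate: the circuit succeeds only if every gate succeeds.

    Assumes independent, identical gate errors (a simplification).
    """
    return (1.0 - error_per_gate) ** num_gates


if __name__ == "__main__":
    # Hypothetical per-gate error rates, not measured values for any chip.
    for error in (1e-2, 1e-3, 1e-4):
        for gates in (100, 1_000, 5_000):
            success = estimated_success(gates, error)
            print(f"error={error:g}  gates={gates:>5}  success~{success:.4f}")
```

Under this toy model, a 5,000-gate circuit at a 0.1% gate error rate succeeds well under 1% of the time. That is roughly why progress at this depth leans on lower error rates and error mitigation together, not on either one alone.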

That progress is tied not only to hardware improvements, but also to the software layer. IBM has invested heavily in Qiskit, its open-source quantum software stack, and in runtime tools that help users build, optimize, and execute workloads more efficiently. The result is that improved chips and improved software are now moving together instead of in separate lanes. That pairing is one of the strongest reasons the platform is becoming more powerful in practice, not just on slides.

Heron Was Important. Nighthawk Looks Even More Interesting.

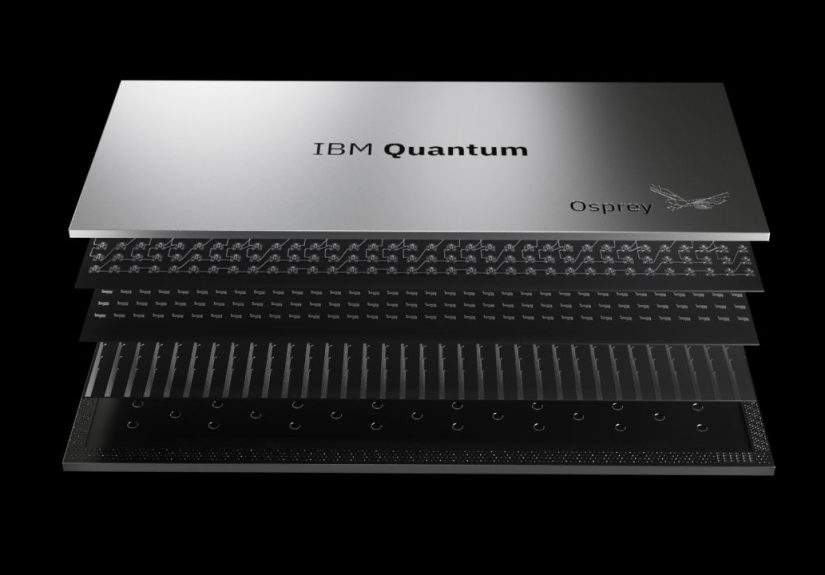

If Heron helped prove that IBM could deliver utility-scale performance, Nighthawk is shaping up to be the hardware line that expands what users can actually try. IBM describes Nighthawk as a higher-connectivity processor, and that phrase matters more than it might sound.

In a quantum processor, connectivity affects how efficiently qubits can interact. Better connectivity can reduce the overhead required to move information around a chip, which in turn can help preserve fidelity. That means developers may be able to run more complex workloads without paying as steep an error penalty. In plain English: less awkward shuffling, less chaos, more useful computation.
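To make the “awkward shuffling” concrete, here is a minimal sketch that counts how many SWAP operations it takes, on average, to bring two qubits next to each other. The two layouts are toy examples, a 16-qubit line and a 4x4 grid, and are not meant to represent any actual IBM chip. The model also assumes one qubit is simply walked toward the other, which real compilers improve upon.

```python
from collections import deque
from itertools import combinations


def line_graph(n):
    """Qubits in a row: each one touches only its left and right neighbor."""
    return {i: [j for j in (i - 1, i + 1) if 0 <= j < n] for i in range(n)}


def grid_graph(rows, cols):
    """Qubits on a square grid: each one touches up to four neighbors."""
    graph = {}
    for r in range(rows):
        for c in range(cols):
            neighbors = []
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                rr, cc = r + dr, c + dc
                if 0 <= rr < rows and 0 <= cc < cols:
                    neighbors.append(rr * cols + cc)
            graph[r * cols + c] = neighbors
    return graph


def distances_from(graph, start):
    """Breadth-first search: hop count from `start` to every other qubit."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph[node]:
            if nxt not in dist:
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    return dist


def average_swaps(graph):
    """Average SWAPs needed to make a qubit pair adjacent (distance - 1)."""
    all_dist = {q: distances_from(graph, q) for q in graph}
    pairs = list(combinations(graph, 2))
    return sum(all_dist[a][b] - 1 for a, b in pairs) / len(pairs)


if __name__ == "__main__":
    print(f"16-qubit line: {average_swaps(line_graph(16)):.2f} SWAPs on average")
    print(f"4x4 grid:      {average_swaps(grid_graph(4, 4)):.2f} SWAPs on average")
```

With the same number of qubits, the better-connected grid needs far fewer SWAPs per interaction. Since every SWAP is itself built from error-prone two-qubit gates, fewer of them means more of the error budget is left for the actual computation.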

Nighthawk is also part of IBM’s broader modular strategy. Instead of building one giant monolithic chip and hoping physics cooperates, IBM is designing systems that can connect modules together over time. That is a more practical engineering path. It mirrors how classical computing evolved: not by waiting for one magical machine, but by building better systems architecture, packaging, interconnects, and software orchestration.

This modular approach is a major reason IBM’s quantum computing is about to get much more powerful. Power is coming from the ability to connect pieces of a system more intelligently, not merely from stuffing more fragile qubits into a single package and praying to the cryogenic gods.

The Real Game-Changer Is Error Correction

If you remember one thing about quantum computing, remember this: errors are the villain. Every ambitious quantum roadmap eventually runs into the same problem. Qubits are delicate. They drift, decohere, misfire, and generally behave like brilliant employees who also happen to panic under fluorescent lighting.

That is why error correction is the central challenge in the entire field. A useful large-scale quantum computer cannot depend on perfect physical qubits, because those do not exist. Instead, it needs logical qubits built from many physical qubits in a way that detects and corrects errors continuously.
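The core idea of trading many unreliable units for one more reliable one can be sketched with a classical three-bit repetition code. This is only an analogy: real quantum codes must also handle phase errors and must detect errors without directly reading the data, and this is not the scheme IBM uses. All names and the 10% flip probability are hypothetical.

```python
import random


def encode(bit):
    """Store one logical bit as three physical copies."""
    return [bit, bit, bit]


def apply_noise(codeword, flip_prob, rng):
    """Flip each physical bit independently with probability flip_prob."""
    return [b ^ (rng.random() < flip_prob) for b in codeword]


def decode(codeword):
    """Majority vote: corrects any single flipped bit."""
    return int(sum(codeword) >= 2)


def logical_error_rate(flip_prob, trials, seed=0):
    """Monte Carlo estimate of how often the decoded bit is wrong."""
    rng = random.Random(seed)
    failures = sum(
        decode(apply_noise(encode(0), flip_prob, rng)) != 0 for _ in range(trials)
    )
    return failures / trials


def exact_logical_error_rate(p):
    """Decoding fails when two or three of the three bits flip."""
    return 3 * p**2 * (1 - p) + p**3


if __name__ == "__main__":
    p = 0.10  # hypothetical physical error rate
    print("physical error rate:", p)
    print("simulated logical  :", logical_error_rate(p, trials=20_000))
    print("exact logical      :", exact_logical_error_rate(p))
```

For small physical error rates, the encoded bit fails less often than a single unprotected bit would. The price is three physical bits per logical bit, and that overhead is exactly what better codes try to shrink.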

IBM’s newer strategy leans into quantum low-density parity-check, or qLDPC, codes. The broad idea is to reduce the overhead needed for fault tolerance compared with older approaches that demanded an enormous number of physical qubits per logical qubit. That matters because the economics of quantum computing change dramatically if the error-correction burden drops. Suddenly, the road to a practical machine looks like an engineering marathon instead of a sci-fi trilogy.
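A rough arithmetic sketch gives a feel for the overhead gap. None of the figures below come from this article. The surface-code count uses the standard rotated-layout formula, and the qLDPC example uses the [[144,12,12]] code parameters from published work on bivariate bicycle codes, with one check qubit per data qubit. Matching the two codes by distance alone is a simplification, so treat the ratio as illustrative, not as a performance claim.

```python
def rotated_surface_code_qubits(distance: int) -> int:
    """Physical qubits for ONE logical qubit in a rotated surface code.

    d*d data qubits plus d*d - 1 measurement qubits.
    """
    return 2 * distance * distance - 1


def block_code_qubits(n_data: int, n_check: int) -> int:
    """Physical qubits for one block of a code: data plus check qubits."""
    return n_data + n_check


# Illustrative comparison: 12 logical qubits at code distance 12.
logical_qubits = 12
distance = 12

surface_total = logical_qubits * rotated_surface_code_qubits(distance)
# Assumed qLDPC block: 144 data + 144 check qubits encoding 12 logical qubits.
qldpc_total = block_code_qubits(n_data=144, n_check=144)

if __name__ == "__main__":
    print("surface code physical qubits:", surface_total)
    print("qLDPC block physical qubits :", qldpc_total)
    print(f"ratio: about {surface_total / qldpc_total:.1f}x")
```

Under these assumptions the same dozen logical qubits cost roughly an order of magnitude fewer physical qubits, which is the kind of shift that changes the economics.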

This is where IBM’s roadmap starts to sound less like marketing and more like systems design. The company is not simply saying, “Trust us, fault tolerance is coming.” It is laying out intermediate hardware steps meant to test couplers, packaging, logical modules, and memory. Those are the boring-sounding details that usually separate a real platform from a glossy keynote.

From Loon to Kookaburra to Starling

IBM’s roadmap includes a flock of processor names that sound like they escaped from a very ambitious bird sanctuary: Loon, Nighthawk, Kookaburra, Cockatoo, and Starling. Cute branding aside, these names represent an important progression.

Loon is intended to test key architectural features for fault-tolerant quantum computing, especially around couplers and packaging. Kookaburra is expected to demonstrate a module that combines a logical processing unit with quantum memory. Cockatoo, per IBM’s roadmap, is meant to link such modules together. Taken together, that is a big conceptual step because it pushes IBM closer to the building blocks needed for a modular, fault-tolerant system rather than a one-off experimental device.

Then comes Starling, IBM’s planned large-scale fault-tolerant quantum computer. The company has said Starling is targeted to run on the order of 100 million quantum operations using 200 logical qubits. Those numbers matter not as bragging rights, but because they imply workloads well beyond today’s narrow demonstrations.
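A quick calculation hints at what that target demands from error correction. The sketch below assumes every logical operation fails independently with the same probability, and it picks a 50% chance of an error-free run as a hypothetical goal. Neither assumption comes from IBM.

```python
import math


def max_error_per_operation(num_operations: int, target_success: float) -> float:
    """Largest per-operation error rate p with (1 - p)**num_operations >= target.

    Solves (1 - p)**N = S for p, using expm1/log for numerical accuracy.
    """
    return -math.expm1(math.log(target_success) / num_operations)


if __name__ == "__main__":
    operations = 100_000_000  # order of magnitude quoted for Starling
    target = 0.5              # hypothetical: half of all runs finish error-free
    p = max_error_per_operation(operations, target)
    print(f"each logical operation may fail at most ~{p:.2e} of the time")
```

Under those assumptions, each logical operation has to fail less than about once in a hundred million tries. That is far below the error rate of any bare physical qubit today, which is why the logical-qubit machinery is not optional.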

If IBM hits that target, the conversation around quantum computing changes. The question will no longer be whether quantum hardware can do impressive niche tricks. It will be whether certain real-world tasks are now meaningfully better on a quantum-centric system than on classical-only approaches.

Quantum Plus HPC Is the Actual Story

The smartest part of IBM’s strategy may be that it does not frame quantum computers as replacements for classical machines. Instead, IBM keeps talking about quantum plus HPC, meaning quantum processors working alongside classical high-performance computing resources.

That is a much more believable vision than the old fantasy where quantum computers suddenly devour every supercomputer on Earth. In reality, quantum systems are likely to serve as specialized accelerators inside hybrid workflows. Classical hardware will still handle control logic, data preparation, optimization loops, simulation shortcuts, and verification steps. Quantum hardware will tackle the subproblems where quantum mechanics gives it an edge.

This hybrid model is powerful because it lowers the bar for practical usefulness. IBM does not need quantum machines to beat classical machines at everything. It needs them to beat classical-only workflows at enough valuable tasks to matter. That is a far more realistic path to quantum advantage, and it aligns well with how enterprise computing actually works.
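The division of labor can be sketched as a loop: a classical optimizer proposes parameters, the quantum processor returns a measured number, and the optimizer adjusts. In the sketch below the “quantum” call is a stand-in computed classically (the expectation value of Z after a single RY rotation on one qubit, which equals cos θ), so it runs anywhere. The function names, learning rate, and step count are hypothetical, and this is not IBM’s API.

```python
import math


def quantum_expectation(theta: float) -> float:
    """Stand-in for a QPU job: <Z> after RY(theta) on |0>, equal to cos(theta).

    In a real hybrid workflow this value would come back from quantum hardware.
    """
    return math.cos(theta)


def parameter_shift_gradient(theta: float) -> float:
    """Gradient from two extra circuit evaluations (parameter-shift rule)."""
    shift = math.pi / 2
    plus = quantum_expectation(theta + shift)
    minus = quantum_expectation(theta - shift)
    return 0.5 * (plus - minus)


def minimize(theta: float, learning_rate: float = 0.4, steps: int = 50) -> float:
    """Classical outer loop: plain gradient descent on the measured value."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta


if __name__ == "__main__":
    theta_opt = minimize(theta=0.3)
    print("optimal angle :", theta_opt)
    print("minimum of <Z>:", quantum_expectation(theta_opt))
```

The classical side does all the bookkeeping and decision-making, and the quantum side is called only for the one quantity it is uniquely suited to produce. Swap the stand-in for a real hardware call and the structure of the loop stays the same.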

Where More Powerful IBM Quantum Systems Could Matter First

Chemistry and Materials Discovery

Quantum systems are naturally good at representing quantum systems. That makes chemistry a prime candidate. Molecules, catalysts, reaction pathways, and advanced materials all involve interactions that grow difficult for classical simulation as complexity rises. IBM has repeatedly positioned chemistry and materials science as leading areas for early impact, and for good reason.
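One way to see why classical simulation gets difficult: storing the full quantum state of n qubits by brute force takes 2^n complex numbers. The sketch below assumes 16 bytes per amplitude (two 64-bit floats). Smarter classical methods avoid storing the full state for some problems, so this is an upper bound on the naive approach, not a proof that a quantum computer is required.

```python
def statevector_bytes(num_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory needed to store every amplitude of an n-qubit state."""
    return (2 ** num_qubits) * bytes_per_amplitude


def human_readable(num_bytes: int) -> str:
    """Format a byte count using binary units."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB"]
    value = float(num_bytes)
    for unit in units:
        if value < 1024 or unit == units[-1]:
            return f"{value:.0f} {unit}"
        value /= 1024


if __name__ == "__main__":
    for n in (10, 20, 30, 40, 50):
        print(f"{n} qubits: {human_readable(statevector_bytes(n))}")
```

Each added qubit doubles the memory. Thirty qubits fit on a well-equipped workstation at 16 GiB, while fifty need 16 PiB, which is supercomputer territory.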

If researchers can model molecular behavior more efficiently, they may accelerate the search for better batteries, improved semiconductors, specialty chemicals, and new pharmaceutical candidates. Nobody should expect quantum computers to invent a miracle drug by Tuesday, but they may increasingly help scientists narrow the search space faster.

Optimization

Optimization problems are everywhere: logistics, scheduling, portfolio construction, manufacturing, routing, supply chains. These are the kinds of problems that cause executives to say things like “Surely there must be a better way,” right before opening a spreadsheet with 47 tabs.

Quantum optimization is still a developing area, and experts remain careful about when and where true advantage will appear. But IBM has continued exploring optimization workflows because they map well to hybrid computing. A stronger quantum system with better connectivity and better error mitigation may help on carefully chosen, high-value problem classes.

Scientific Simulation

As systems improve, quantum simulation could become one of the clearest proofs of usefulness. Rather than solving every type of commercial problem immediately, IBM may first win by helping researchers study physical systems that are exceptionally hard to model classically. That is not a small niche. It is the foundation of much of modern chemistry, condensed matter research, and advanced materials design.

What Still Stands in the Way

Now for the part where we put the pom-poms down for a moment.

Quantum computing still faces major obstacles. Error rates must continue falling. Control electronics and cryogenic systems must scale efficiently. Software tooling has to mature. Verification remains difficult. And the industry still needs clear, agreed-upon demonstrations of quantum advantage on meaningful workloads, not just carefully curated benchmarks.

There is also the risk of overpromising. Quantum history is full of headlines that arrived five years earlier than reality. IBM’s roadmap is serious, but it is still a roadmap, not a time machine. Engineering schedules slip. Technical surprises happen. Physics does not care about product launches.

Still, the tone of the field has changed. The discussion is no longer just “Can anyone build enough qubits?” It is “Which architecture is most likely to scale into a fault-tolerant system that solves useful problems?” That is a more mature, more demanding, and more interesting question.

Why This Moment Feels Different

IBM’s quantum computing is about to get much more powerful because multiple layers of the stack are improving at once. The hardware is getting better. The architecture is getting smarter. The software is becoming more practical. The systems are being designed for modular growth. Error correction is moving from abstract theory toward implementation. And the roadmap increasingly centers on useful workloads rather than vague future glory.

That does not mean we are waking up tomorrow in a fully quantum world. Your phone will still be annoyingly classical. Your laptop will still overheat when you open too many browser tabs. But inside research labs, enterprise pilots, and cloud-access quantum platforms, the shift is already underway.

The biggest change is not that IBM is building a bigger machine. It is that IBM is building a more believable path from today’s quantum utility experiments to tomorrow’s fault-tolerant, application-scale computing. In this industry, belief matters. Not blind belief, but technical credibility. And right now, IBM appears to have more of it than many competitors when it comes to connecting present performance with future scale.

So yes, IBM’s quantum computing is about to get much more powerful. Not in a comic-book way. In a better way. In the way that counts: more depth, more structure, more integration, more reliability, and a clearer route to solving problems that classical systems alone struggle to handle.

Experience Section: What It Feels Like to Work Around a More Powerful IBM Quantum Platform

For people outside the field, a stronger IBM quantum platform may sound abstract, like hearing that a telescope got better mirrors. Cool, probably important, but hard to feel. For researchers, developers, and enterprise teams experimenting with quantum workflows, the experience is much more concrete.

First, there is a psychological shift. A few years ago, many users approached cloud quantum systems like museum exhibits. They were fascinating, educational, and slightly fragile. You would test a circuit, admire the result, and accept that the hardware might not yet support anything close to your full ambition. As IBM’s systems improve, that relationship changes. Users begin to treat the platform less like a novelty and more like an instrument. That is a huge difference. Scientists trust instruments. They only politely admire novelties.

Second, better hardware changes the rhythm of experimentation. When circuits can run deeper with more stability, researchers spend less time trimming ideas down to fit the machine and more time asking whether the machine can reveal something genuinely interesting. In chemistry, for example, the practical experience shifts from “Can I make this toy model run at all?” to “Can I now test a larger, more realistic molecular setup and compare it against classical approximations?” That is the kind of upgrade that makes long-term users sit up straighter in their chairs.

Third, stronger IBM systems improve collaboration between quantum specialists and classical computing teams. That may sound dull, but it is actually where the action is. Most enterprise users do not want a mysterious quantum box that requires a priesthood to operate. They want workflows, toolchains, benchmarks, profiling tools, and ways to connect quantum jobs with existing HPC infrastructure. As IBM keeps pushing quantum plus HPC, the experience becomes less about isolated quantum experiments and more about integrating QPUs into normal research pipelines.

There is also a noticeable shift in ambition. When a platform becomes more reliable, people stop asking only whether it works and start asking what it is worth. That changes meeting agendas. Instead of talking about access and curiosity, teams begin discussing use cases, return on effort, domain-specific algorithms, and whether a given workload might plausibly show a scientific or commercial edge. That is when a technology starts maturing.

For developers using Qiskit and related tools, the experience of a more powerful IBM quantum environment is also about reduced friction. Better compilation, smarter runtime tools, and application-focused functions mean users can spend more time on the problem and less time wrestling with the plumbing. No one gets into quantum computing because they dream of debugging everything forever. They get into it because they want to explore new computational territory.

Most of all, the lived experience becomes more serious. A stronger IBM platform will not make every quantum promise come true overnight, but it does make the day-to-day work feel less speculative. The field begins to feel less like waiting for the future and more like carefully building it. That is the kind of experience researchers remember: the moment a technology stops being mostly potential and starts becoming a tool.

Conclusion

IBM’s next phase in quantum computing is not about flashy numbers alone. It is about delivering better processors, stronger connectivity, smarter modular design, hybrid quantum-HPC workflows, and a credible march toward fault tolerance. If the company continues hitting its roadmap milestones, the industry may look back on this period as the moment quantum computing stopped being mostly a promise and started becoming a practical platform for discovery.